Nine years ago, a large amount of methane was discovered in the Martian atmosphere. Because methane doesn’t stay in the atmosphere for long, something must be replenishing it.

On Earth, it’s predominantly living organisms that do the replenishing.

Although that's a possible source for Mars' methane as well, an inorganic origin seems more likely. Two years ago, I wrote that cosmologists had ruled out meteorites as that source.

Since then, Frank Keppler and a team of researchers from the Max Planck Institute for Chemistry, Utrecht University, the University of Edinburgh and the University of West Hungary have been successful in tracing the Martian methane to its source. They found that the methane comes from... meteorites. Yes, you read that right.

The cosmologists exposed the Murchison meteorite, a large meteorite that landed in Australia in 1969, to UV radiation at levels comparable to those found on the surface of Mars. Because Mars has no ozone layer, these levels are much higher than those on Earth.

Exposing the meteorite to UV radiation released large amounts of methane. Isotope analysis confirmed that the methane released from the Murchison meteorite was of extraterrestrial origin. In addition, the amount of methane released increased with increasing temperature. This correlates well with methane concentrations on Mars, where more methane is found in the warmer regions. The Murchison meteorite is thought to be of similar composition to the myriad meteorites that bombard Mars every day.

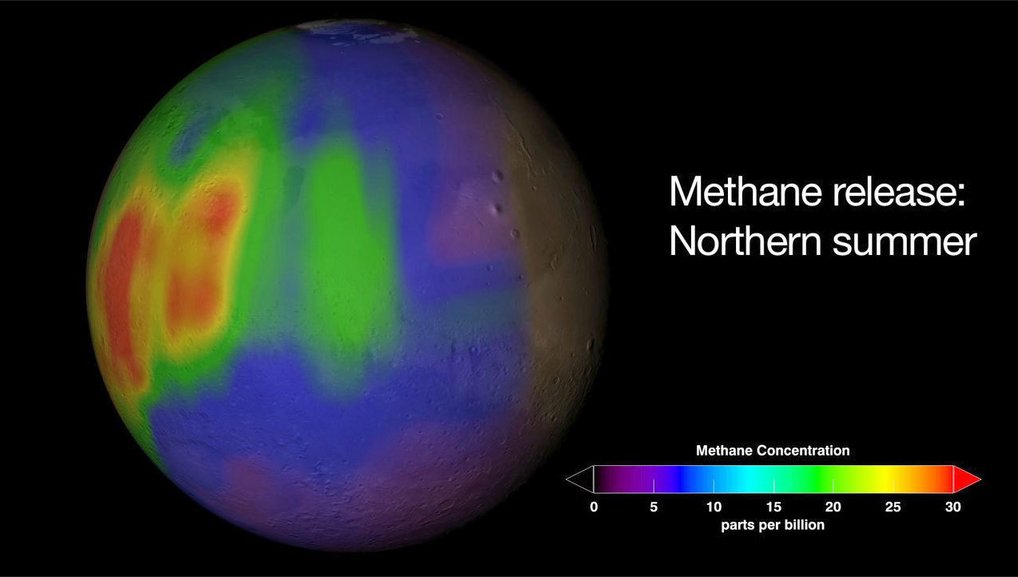

Methane concentration on Mars: This chart depicts the calculated methane concentrations in parts per billion (ppb) on Mars during summer in the northern hemisphere. Violet and blue indicate small quantities of methane; red indicates larger ones. © NASA.

So why did I report previously that the methane could not have come from meteorites? In the prior study, cosmologists looked at how much methane could be released from meteorites as they ablated, or burned up, in the atmosphere. Apparently not that much. However, meteorites that make it to the ground and are subsequently pummeled with UV radiation can and do give off significant amounts of methane.

And thus, science marches on.
